Many organizations are using AI. Few have governed it.

Policies are drafted but not enforced. Risk classifications exist in spreadsheets but not in practice. Accountability is assumed until something goes wrong.

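The EU AI Act's August 2, 2026 application date for most high-risk AI system obligations is not a distant problem. Some obligations, including the prohibitions on certain AI practices and the AI literacy requirement, have applied since February 2, 2025, and organizations with high-risk AI systems need their controls in place before the 2026 date, not after it. And compliance is only one dimension of trustworthy AI.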

This assessment tells you where the gaps are before an auditor, a regulator, or an incident does.

Your personalized results include four deliverables:

Overall Maturity Level

Mapped to the five-level Trustworthy AI Governance Maturity Model, from Ad Hoc (Level 1) to Optimized (Level 5).

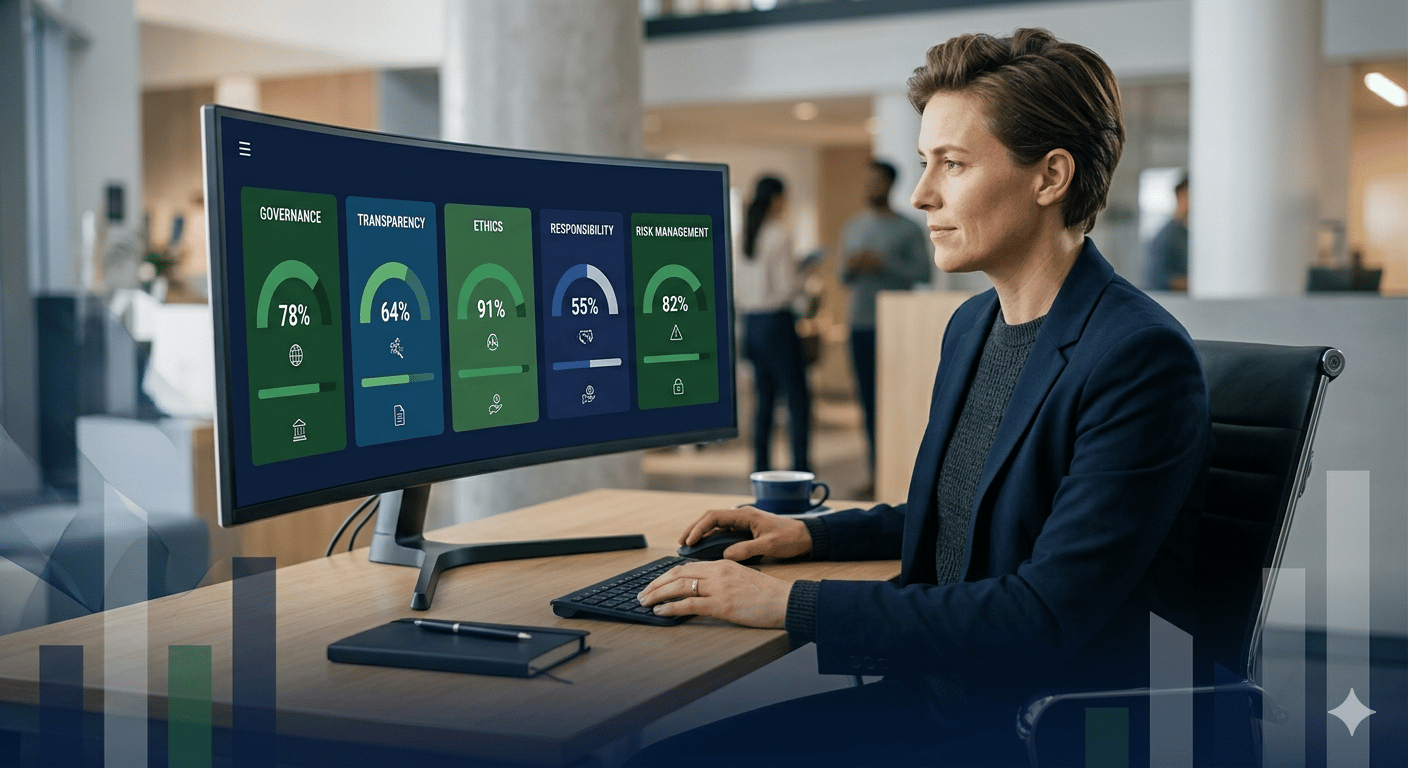

Pillar-by-Pillar Score

Scored across all five Trustworthy AI pillars: AI Governance, Transparent AI, Ethical AI, Responsible AI, and AI Risk Management.

Governance Gap Analysis

Identifies which pillars are holding your program back and why.

Targeted Recommendations

Specific next steps and resources matched to your maturity level and highest-priority gaps.